I often hear variations of this statement at the beginning of each semester. Writing is not something students tend to associate with statistics, nor is it something that most stats faculty members have been formally trained to teach. However, the ability to create and critique written communication involving data and statistics is becoming increasingly important.... Continue Reading →

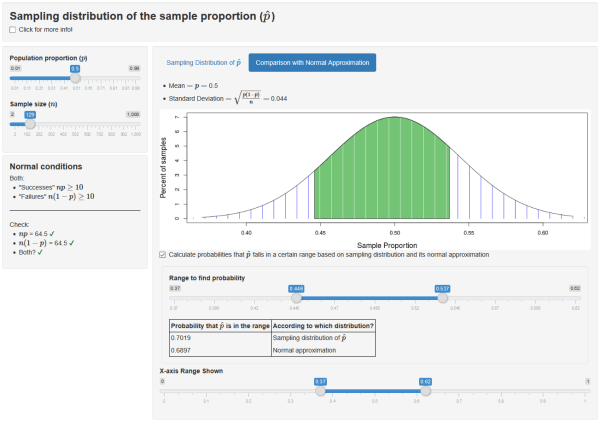

Ooh, Shiny!: R Shiny apps as a teaching tool

Interactive web applications (or apps), such as the Rossman-Chance collection, are popular tools for teaching statistics because they help illustrate fundamental concepts such as randomness, sampling, and variability through dynamic visualizations. The StatKey collection of apps, created for the Lock5 textbook series to demonstrate and perform simulation-based inference, is another example. Historically, despite the utility... Continue Reading →

Specifications-Grading: An Example

Sometimes Specifications-Grading (Nilson, 2015) can feel like cooking – I may have all the ingredients, but that doesn’t mean I can turn them into an edible product. Bouncing ideas off colleagues has been extremely beneficial. In this post, I will discuss an implementation I used for an intermediate statistics course. Setting up the Hurdles... Continue Reading →

Specifications-Grading: An Overview

Motivation: What is the least enjoyable part about being a professor? For me, the answer is easily “grading.” For years I dreaded the whole process – determining whether a response was worth 4 points or 5, ensuring consistency across students, and arguing over partial credit instead of discussing course content. Opposite this dread was the knowledge that one... Continue Reading →

Icebreakers! (not the gum)

To start off this post, it’s probably fitting to quote a Duran Duran song (1990): “The lasting first impression is what you're looking for.” Besides starting with the usual housekeeping on the first day of class, why not set the tone for the course by providing students with a glimpse into the classroom environment as... Continue Reading →

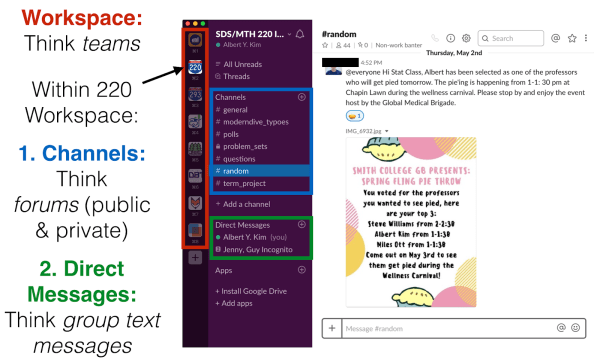

Slack for (A)synchronous Course Communication

You might have heard of Slack before. But what is it? Is it email? Is it a chat room? Slack describes its flagship product as a “collaboration hub that can replace email to help you and your team work together seamlessly.” In this blog post, we’ll describe how we’ve been using Slack for asynchronous course communication,... Continue Reading →